Carleton University

GEOM4003 - Remote Sensing of the Environment

Final exam from Winter 2011

Duration: 2 hours

10 known questions + 1 unknown

Notes:

- These are the potential exam questions supplied by the professor in the Winter 2011 term, and the answers were put together by students in that class as a study guide for the final.

- I am not responsible for the mark any person gets on a future GEOM4003 exam.

- These Q/A are from a GEOM4003 final exam given in a semester in 2011; I do not guarantee that any of them will be on a future version of any exam.

- I do not guarantee the accuracy of these Q/A.

- These potential questions were divided among six students and then compiled. The following answers may not be correct or complete.

Question 1 (6 marks): Solar radiation is scattered by the atmosphere. Identify and explain the three types of scattering processes that occur.

Answer: Scattering is the unpredictable diffusion of radiation by particles in the atmosphere. There are three types:

- Rayleigh scattering: Occurs when atmospheric particles are much smaller than the wavelength of EMR. The shortest wavelengths are scattered most strongly.

- Mie scattering: Occurs when atmospheric particle size is roughly equal to the wavelength of EMR. It affects longer wavelengths than Rayleigh scattering does.

- Non-selective scattering: Occurs when atmospheric particles are much larger than the wavelength of EMR. EMR of all wavelengths is scattered about equally, hence the name non-selective.

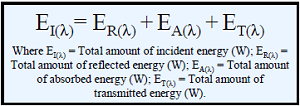

Question 2 (2 marks): State and describe the equation that describes the Earth’s shortwave energy balance (the terms you should use are incident energy, absorption, transmittance, and reflectance).

Answer: The total amount of incident energy is equal to the total amount of reflected energy + the total amount of absorbed energy + the total amount of transmitted energy.
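The balance stated in words above can be written symbolically. The symbols below are assumptions chosen for illustration (the source does not name them): E_I is incident energy, E_R reflected, E_A absorbed and E_T transmitted energy, each at wavelength λ. Dividing through by E_I gives the equivalent statement that reflectance, absorptance and transmittance sum to one.

```latex
E_I(\lambda) = E_R(\lambda) + E_A(\lambda) + E_T(\lambda)
\qquad\Longrightarrow\qquad
\rho(\lambda) + \alpha(\lambda) + \tau(\lambda) = 1,
\quad \text{where } \rho = \frac{E_R}{E_I},\ \alpha = \frac{E_A}{E_I},\ \tau = \frac{E_T}{E_I}
```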

Question 3 (3 marks): What are atmospheric windows and why are they important to remote sensing?

Answer: Wavelength ranges in which little atmospheric absorption occurs are called atmospheric windows. These are important to remote sensing because they allow us to accurately measure surface characteristics within given wavelengths of EMR. Furthermore, users of remotely sensed imagery can target their analyses around these wavelengths and in turn produce high quality remotely sensed imagery products which characterize certain terrain for a given objective.

Question 4 (4 marks): Remote sensors measure the radiance of a ground target. Define the term radiance (giving the units of measurement) and explain why remote measurements of radiance only partially capture ground reflectance characteristics.

Answer: Sensors measure radiance: the amount of EMR reflected or emitted per unit source area per unit solid angle (W m⁻² sr⁻¹). Remote measurements of radiance only partially capture ground reflectance characteristics because at-sensor radiance also includes radiance from non-target sources, known as path radiance. These sources include atmospheric reflection and scattering as well as reflectance from other non-target surfaces.

Question 5 (6 marks): Define the terms path radiance, target radiance and at-sensor radiance. Use a diagram to illustrate your answer.

Answer:

- At-sensor radiance: The total radiance arriving at the sensor, which includes both target and path radiance.

- Target radiance: The radiance coming directly from the target area of interest that is being measured.

- Path radiance: Radiance from non-target sources, which can include atmospheric reflection and scattering, and reflectance from other non-target surfaces.

Question 6 (2 marks): Define the terms surface reflectance and albedo, and give their units of measurement.

Answer: Albedo is the ratio of radiation reflected from a surface to the radiation incident upon it; i.e. surface albedo is the fraction of incoming EMR that is reflected by the ground. Being a ratio, it is unitless (expressed as a fraction from 0 to 1 or as a percentage), as is surface reflectance. Surface reflectance is dictated by the surface roughness compared to the wavelength of EMR. There are two primary types of surface reflectance:

- Diffuse: When wavelength is less than variations in surface height or surface particle sizes, reflection from surface is diffuse.

- Specular: Smoother surfaces are specular or near-specular reflectors.

Question 7 (3 marks): What is the bidirectional reflectance distribution function (BRDF) and how do we characterize it?

Answer: The bidirectional reflectance distribution function gives the target reflectance as a function of sun and view geometry. The BRDF can be characterized by taking multi-angular observations of a surface.

Question 8 (2 marks): Describe two ways / mechanisms to characterize the BRDF of a surface.

Answer: The bidirectional reflectance distribution function (BRDF) is characterized through multi-angular observations, whereby the reflectance of a surface is measured from all angles to produce a 3D model of its reflectance. One method of accomplishing this is through the use of a ground-based goniometer, as in Figure 1 below. A second method is through the use of multiple-view airborne or satellite data, such as that provided by the MISR instrument on NASA's Terra satellite.

Question 9 (7 marks): On a single graph, draw hypothetical spectral reflectance curves for healthy and unhealthy vegetation. Identify and explain the leaf physiological properties that lead to differences in these curves. Your diagram should include wavelengths stretching from the blue to the short-wave infrared. Make sure the B, G, R, NIR and SWIR wavebands are labeled on your x axis.

Answer: As seen in the figure below, unhealthy grass overall reflects much more across all wavelengths than healthy grass, except in the NIR, where healthy grass reflects slightly more. Also, unhealthy grass shows a trend of steadily increasing reflectance from blue to SWIR, whereas healthy grass has many variations in reflectance levels. For example, reflectance off healthy grass increases from blue to green, then falls from green to red, before increasing sharply in the NIR and falling again in the SWIR. The higher absorption of blue and red wavelengths in healthy grass is a result of the chlorophyll content of the grass, which absorbs more blue and red radiation than green in the process of photosynthesis. Since unhealthy grass has lost its chlorophyll, it absorbs more green radiation and reflects more highly in the red. The high NIR reflectance of healthy grass results from scattering within the internal cell structure of the leaf (the spongy mesophyll). Differences in SWIR reflectance are a result of the water content of the grass. Healthy grass contains more water in its mesophyll layer, which absorbs SWIR radiation. Unhealthy grass has dried out, and as such it reflects SWIR much more highly.

Question 10 (3 marks): What are the main plant physical characteristics that influence leaf reflectance in visible wavelengths?

Answer: The main physical characteristics that influence leaf reflectance in visible wavelengths are the pigment levels contained within the leaf. Chlorophyll A and B absorb blue and red wavelengths. Chlorophyll A and B are found in the plant’s mesophyll layer. Chlorophyll A and B do not absorb as much at green wavelengths as at red and blue, which is why leaves appear to be green. When leaves are under stress or senesce, chlorophyll pigments begin to disappear, and reflectance in the red and blue wavelengths increases relative to green, so the leaves change colour.

Question 11 (1 marks): Plants reflect highly in the green portion of the electromagnetic spectrum. True or false? Justify your answer in one or two sentences.

Answer: False. Plants do not reflect highly in green wavelengths; they simply reflect more green than blue and red, the other wavelengths in the visible spectrum.

Question 12 (3 marks): What are the main characteristics that influence plant canopy reflectance in visible wavelengths?

Answer: There are many factors that influence the reflectance of plant canopies in visible wavelengths. Some of these factors are measurement-dependent, and others are ecosystem-dependent. Measurement-dependent properties include sun angle, sensor geometry, spectral sensitivity of the sensor, and sensor instantaneous field of view (IFOV). Ecosystem-dependent properties include crown shape and diameter, trunk density and diameter, leaf area and angle, understory area and angle, and soil texture, moisture and colour.

Question 13 (3 marks): What are spectral vegetation indices and why are they useful? Give an example.

Answer: Spectral vegetation indices are mathematical algorithms that attempt to enhance vegetation signals in satellite data while minimizing background noise. They attempt to correct for various sources of error such as atmospheric conditions, sun and view angle, topographic slope and soil variation. We use vegetation indices to construct accurate models of biophysical conditions. The presence of background noise can often lead an analyst to a false conclusion about vegetation conditions. Therefore, it is critical that vegetation data be corrected through the use of a vegetation index. An example of a vegetation index is the Normalized Difference Vegetation Index (NDVI).

Question 14 (5 marks): What are the ideal properties of a “good” vegetation index? Do indices always meet these properties? Give an example to illustrate your point.

Answer: A good vegetation index should maximize sensitivity to plant biophysical parameters over a wide range of vegetation conditions, normalize the effects of sun and view angle or atmosphere for consistent spatio-temporal comparisons, normalize the effects of canopy background variation such as slope, soil variation and woody material, and be coupled to a specific measurable biophysical parameter such as biomass or water content. VIs do not always meet these properties. An example is the SAVI index, which fails to accurately normalize the effects of view angle.

Question 15 (6 marks): Identify and briefly explain the factors that influence the spectral characteristics of natural lake water bodies. Compare these factors with those of deep ocean water.

Answer: Natural lakes often contain low-mineral water, and can have near-ideal water reflectance curves, especially if they are surrounded by wind barriers and thus have relatively smooth surfaces. However, the spectral characteristics depend in part on the depth of the water, which if shallow can allow radiation to reflect off the bottom. This is in contrast to deep ocean water on all three levels. Open ocean water is deep, has zero wind barriers, and has high mineral content.

Question 16 (5 marks): The total at-sensor radiance over a water body is the sum of four sources of EMR. One of these sources is the subsurface volumetric radiance of the water. (a) List and describe the other three sources of EMR that contribute to at-sensor radiance over water; and (b) Specify the equation you would use to calculate the subsurface volumetric radiance (Hint: the other terms in the equation are the total at-sensor radiance and the three radiances you identified in (a)).

Answer: Not answered.

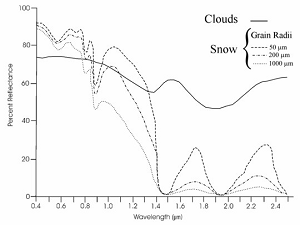

Question 17 (5 marks): On a single graph, draw hypothetical spectral reflectance curves for snow and clouds. Your diagram should include wavelengths stretching from the blue to the short-wave infrared. Using your diagram, explain what wavelengths are more suitable for distinguishing between snow and cloud. Make sure the B, G, R, NIR and SWIR wavebands are labeled on your x axis.

Answer:

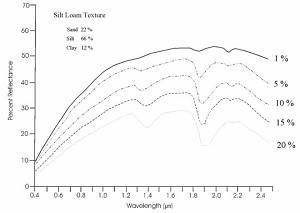

Question 18 (3 marks): On a single graph, draw hypothetical spectral reflectance curves for dry and wet soil. Your diagram should include wavelengths stretching from the blue to the short-wave infrared. Make sure the B, G, R, NIR and SWIR wavebands are labeled on your x axis.

Answer:

Question 19 (6 marks): Explain the term “signal-to-noise ratio” and explain why it is important. List the factors that influence the signal-to-noise ratio recorded by an optical sensor at a given time.

Answer: A signal-to-noise ratio is a measure of how much of a signal has been corrupted by noise (i.e. the higher the ratio the cleaner the signal). In the case of an optical sensor, it refers to how much of each pixel value was generated by incoming radiation versus how much was caused by effects including:

- Fixed pattern noise (physical aberrations of the digital sensor chip; long exposure, low ISO speed)

- Random noise (short exposure, high ISO speed)

Question 20 (Y marks): Explain why sensors measuring in broad wavebands can make spectral measurements at finer spatial resolutions compared to sensors measuring in narrow wavebands.

Answer: Not answered.

Question 21 (3 marks): What is the main difference between multispectral and hyperspectral remote sensing? How do spectral curves derived from these two remote sensing types differ?

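Answer: Multispectral sensors measure a few relatively broad wavebands, whereas hyperspectral sensors measure many narrow, contiguous wavebands that together cover a wide swath of the spectrum. As a result, a spectral curve derived from multispectral data consists of only a few discrete points, whereas a curve derived from hyperspectral data is nearly continuous.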

Question 22 (4 marks): Line or column striping is a problem often encountered in multispectral remote sensing. Describe two methods for correcting line or column striping.

Answer: Multispectral remote sensing is defined as the collection of reflected, emitted, or back-scattered energy from an object or area of interest in multiple bands (regions) of the electromagnetic spectrum. One problem that may occur is line or column striping. This effect can be removed by local averaging and/or histogram normalization methods.

Local averaging – pixels in the defective line are replaced with an average of values of the neighbouring pixels in adjacent lines just above and below. In other words replace bad pixels with values based upon the average of adjacent pixels not influenced by striping; this approach is founded upon the notion that the missing value is probably quite similar to the pixels that are nearby.

Histogram normalization – data from all lines are accumulated at intervals of n lines; the histogram for a defective detector displays an average different from the others. The strategy is to replace bad pixels with new values based upon the mean and standard deviation of the band in question, or upon statistics developed for each detector, assuming that the overall statistics for the missing data must resemble those from the good detectors.
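The local averaging method can be sketched in a few lines of plain Python. This is a minimal illustration, not a production routine: the function name `destripe_local_average` is hypothetical, the image is assumed to be a list of rows for a single band, and the indices of the defective rows are assumed to be known in advance.

```python
def destripe_local_average(image, bad_rows):
    """Replace each defective row with the mean of the nearest good rows above and below."""
    bad = set(bad_rows)
    n_rows = len(image)
    result = [list(row) for row in image]
    for r in sorted(bad):
        # Walk outward until a row not affected by striping is found.
        above = r - 1
        while above >= 0 and above in bad:
            above -= 1
        below = r + 1
        while below < n_rows and below in bad:
            below += 1
        neighbours = [image[i] for i in (above, below) if 0 <= i < n_rows]
        if not neighbours:
            continue  # every row is defective; nothing to average
        # Average column by column across the good neighbouring rows.
        result[r] = [sum(col) / len(neighbours) for col in zip(*neighbours)]
    return result


band = [[10, 12, 14],
        [0, 0, 0],      # dropped line
        [20, 22, 24]]
print(destripe_local_average(band, [1]))
```

A defective row at the top or bottom of the image has only one good neighbour, so in this sketch it is simply copied from that neighbour.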

Question 23 (3 marks): Is it necessary to undertake atmospheric correction when undertaking an image classification using single-date imagery? Explain your answer.

Answer: No, it is not necessary to perform atmospheric correction on a single date of remotely sensed data. This is because atmospherically correcting a single date of imagery is often equivalent to subtracting a constant from all pixels in a spectral band, suggesting that as long as the training data from the image to be classified have the same relative scale, atmospheric correction has little effect on classification accuracy.

Question 24 (6 marks): Describe two methods of undertaking atmospheric correction on a satellite image.

Answer: There are several ways to atmospherically correct remotely sensed data. Two major types of atmospheric correction are absolute atmospheric correction and relative atmospheric correction.

The general goal of absolute radiometric correction is to turn the digital brightness values (or DN) recorded by a remote sensing system into scaled surface reflectance values. These values can then be compared or used in conjunction with scaled surface reflectance values obtained anywhere else on the planet.

- Radiative transfer models – Provide realistic estimates of the effects of atmospheric scattering and absorption on satellite imagery. The user can incorporate ground radiometer measurements from in situ data (single spectrum enhancement).

- Empirical line calibration forces image data to match in situ spectral data obtained at same time and date as image.

Relative atmospheric correction is often used when the information for the absolute atmospheric correction is not available. For a single image, normalize using histogram adjustment. If histograms are shifted to the left so that zero values appear in the data, the effect of atmospheric scatter can be minimized, but this does not correct for atmospheric attenuation (absorption).
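The single-image histogram adjustment can be illustrated with a short sketch. It assumes that the darkest pixel in the band should have a value of zero, so that its recorded value is attributed entirely to atmospheric scatter; the function name `dark_object_subtract` is hypothetical.

```python
def dark_object_subtract(band):
    """Shift a band's histogram to the left so that its minimum value becomes zero."""
    # Assumption: the darkest pixel would be zero without atmospheric scatter.
    dark_value = min(min(row) for row in band)
    return [[pixel - dark_value for pixel in row] for row in band]


band = [[12, 30],
        [45, 18]]
print(dark_object_subtract(band))
```

Because the same constant is subtracted from every pixel, the relative differences between pixels in the band are unchanged.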

For multi-date imagery, normalize using regression: a base image is selected and the other images are transformed to the radiometric scale of this image. The regression coefficients are applied to each pixel in the other dates to normalize the images.

Question 25 (6 marks): List and describe the three types of interpolation used to correct the geometric distortion in satellite images.

Answer: Geometric distortions are errors in an image. They are classified into internal distortions, resulting from the geometry of the sensor, and external distortions, resulting from the altitude of the sensor or the shape of the object. There are three methods of geometric correction (interpolation), as described below:

- Systematic correction – When the geometric reference data or the geometry of the sensor are given or measured, the geometric distortion can be theoretically or systematically avoided. For example, the geometry of a lens camera is given by the collinearity equation with calibrated focal length and lens distortion parameters. The tangent correction for an optical mechanical scanner is a type of systematic correction.

- Non-systematic correction – Polynomials to transform from a geographic coordinate system to an image coordinate system, or vice versa, are determined from the given coordinates of ground control points using the least squares method. The accuracy depends on the order of the polynomials, and the number and distribution of ground control points.

- Combined method – First the systematic correction is applied, then the residual errors are reduced using lower order polynomials. Usually the goal of geometric correction is to obtain an error within plus or minus one pixel of the true position.

Question 26 (9 marks): We often express the accuracy of a classification in terms of (a) user accuracy, and (b) producer accuracy.

- Explain these two terms.

- Is it possible for a classification to have a high producer accuracy, but a low user accuracy? Explain your answer.

- Is it possible for a classification to have a high user accuracy, but a low producer accuracy? Explain your answer.

Answer:

- User’s Accuracy – The probability that a pixel classified as a particular habitat on the image is actually that habitat. Accuracy from the point of view of a map user (not a map maker). How often is the type the map says should be there really there? Can be computed (and reported) for each thematic class. User accuracy = 100 – commission error. User’s accuracies are computed by dividing the number of correctly classified pixels in each category by the total number of pixels that were classified in that category (the row total).

- Producer’s Accuracy – Map accuracy from the point of view of the map maker (producer): the probability that a pixel in a given habitat category has been classified correctly on the image. How often are real features on the ground correctly shown on the map? Can be computed (and reported) for each thematic class. Producer accuracy = 100 – omission error. Producer’s accuracies are computed by dividing the number of correctly classified pixels in each category by the actual number of ground truth pixels for that category (the column total).

- Yes, it is possible for a classification to have a high producer accuracy but a low user accuracy. This happens when the error of omission for a class is low but its error of commission is high: nearly all of the pixels that truly belong to the class are mapped as that class, but many pixels from other classes are also assigned to it.

- Yes, it is possible for a classification to have a high user accuracy but a low producer accuracy. User’s accuracy is high when the error of commission is low, while producer’s accuracy is low when the error of omission is high.
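A small worked example may make the two measures easier to compare. The sketch below follows the layout described above (rows hold the classified categories, columns hold the ground truth categories); the function name `class_accuracies` and the two-class error matrix are made up for illustration.

```python
def class_accuracies(matrix):
    """Return (user's, producer's) accuracies in percent for each class.

    matrix[i][j] = number of pixels classified as class i whose ground truth class is j.
    """
    n = len(matrix)
    row_totals = [sum(matrix[i]) for i in range(n)]
    col_totals = [sum(matrix[i][j] for i in range(n)) for j in range(n)]
    # User's accuracy: correct pixels divided by the row (classified) total.
    users = [100.0 * matrix[k][k] / row_totals[k] if row_totals[k] else None
             for k in range(n)]
    # Producer's accuracy: correct pixels divided by the column (ground truth) total.
    producers = [100.0 * matrix[k][k] / col_totals[k] if col_totals[k] else None
                 for k in range(n)]
    return users, producers


# Hypothetical error matrix, class order: water, forest.
#          truth: water  forest
matrix = [[40, 40],   # classified as water
          [0, 20]]    # classified as forest
users, producers = class_accuracies(matrix)
print(users, producers)
```

In this made-up matrix, water has a producer's accuracy of 100% but a user's accuracy of only 50% because forest pixels were wrongly mapped as water, while forest shows the opposite pattern (user's accuracy of 100%, producer's accuracy of about 33%).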

Question 27 (4 marks): You have been given red and near-infrared spectral Landsat TM bands for a study region. You plan to (a) use the image bands to create an NDVI image for the study area; (b) define a regression relationship between pixel NDVI values to measurements of biomass taken in the field; and (c) apply this relationship to all pixels in the image to create a map of biomass for the study area. Do you need to do an atmospheric correction on your red and near-infrared image bands? Explain your answer.

Answer:

- NDVI is a commonly used vegetation index that is calculated as NDVI = (NIR – red)/(NIR + red), where NDVI = Normalized Difference Vegetation Index and NIR = Near Infrared. The NDVI measure has been found to make it easier to differentiate between vegetation types and has also been useful in biomass estimation.

- NDVI is a unitless measure with a positive correlation to vegetation amount or health. A nonlinear trendline may result from the saturation of NDVI values at high levels of biomass. At high levels of biomass, the sensor is unable to pick up further variations in the NIR, leading to the nonlinear trend. This nonlinear trend has often been observed over forested environments. Another explanation is that the NDVI – biomass relationship is actually a linear relationship, but only appears to be nonlinear because the full range of biomass values has not been sampled (i.e. if we had sampled over a wider range of biomass values, then the relationship would be linear).

- ???
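Steps (a) to (c) in the question can be sketched as follows. This is only an illustration of the workflow: the function names `ndvi` and `fit_line` are hypothetical, the field data are made up, and a simple least-squares straight line is assumed for the NDVI to biomass relationship (which, as noted above, may in practice be nonlinear). It does not address whether atmospheric correction is needed.

```python
def ndvi(nir, red):
    """NDVI = (NIR - red) / (NIR + red) for a single pixel."""
    total = nir + red
    # Returning 0.0 when both bands are zero is an arbitrary placeholder choice.
    return (nir - red) / total if total != 0 else 0.0


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# (a) NDVI image from hypothetical NIR and red bands.
nir_band = [[0.50, 0.40], [0.30, 0.45]]
red_band = [[0.10, 0.20], [0.25, 0.05]]
ndvi_image = [[ndvi(n, r) for n, r in zip(nir_row, red_row)]
              for nir_row, red_row in zip(nir_band, red_band)]

# (b) Regression between NDVI and field biomass (made-up sample values).
field_ndvi = [0.2, 0.4, 0.6]
field_biomass = [1.0, 2.0, 3.0]
slope, intercept = fit_line(field_ndvi, field_biomass)

# (c) Apply the relationship to every pixel to map biomass.
biomass_map = [[slope * v + intercept for v in row] for row in ndvi_image]
print(biomass_map)
```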

Question 28 (3 marks): What are the advantages of using histogram-equalized contrast stretches over simple linear contrast stretches?

Answer: Histogram equalization modeling techniques provide a sophisticated method for modifying the dynamic range and contrast of an image by altering that image such that its intensity histogram has a desired shape. Unlike contrast stretching, histogram modeling operators may employ non-linear and non-monotonic transfer functions to map between pixel intensity values in the input and output images. Histogram equalization employs a monotonic, non-linear mapping which re-assigns the intensity values of pixels in the input image such that the output image contains a uniform distribution of intensities (i.e. a flat histogram). This technique is used in image comparison processes (because it is effective in detail enhancement) and in the correction of non-linear effects introduced by, say, a digitizer or display system. It maximizes the information content of the image.
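The monotonic, non-linear mapping used by histogram equalization can be sketched with a cumulative histogram. This is a minimal version under stated assumptions: the image is a list of rows of integer grey levels, the function name `equalize` is hypothetical, and the rescaling formula is one common way of building the lookup table rather than the only one.

```python
def equalize(image, levels=256):
    """Histogram-equalize a 2-D list of integer grey levels in the range 0..levels-1."""
    pixels = [p for row in image for p in row]
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    # Cumulative histogram: number of pixels with a value <= v.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    total = len(pixels)
    cdf_min = next(c for c in cdf if c > 0)
    if total == cdf_min:
        return [list(row) for row in image]  # constant image, nothing to stretch
    # Lookup table rescaling the cumulative histogram to the full output range.
    lut = [max(0, round((cdf[v] - cdf_min) * (levels - 1) / (total - cdf_min)))
           for v in range(levels)]
    return [[lut[p] for p in row] for row in image]


low_contrast = [[100, 101],
                [102, 102]]
print(equalize(low_contrast))
```

In this example the three closely spaced input values are spread across the full 0 to 255 range while keeping their original order.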

Question 29 (4 marks): Explain the concepts of low-frequency and high-frequency filtering in the spatial domain.

Answer: Low spatial frequencies occur where pixel values change gradually. A low-frequency filter (LFF) emphasizes these low frequencies. A simple version replaces each pixel brightness with the mean brightness of a window around it; this “denoises” the image, with blurring becoming more severe as window size increases. High spatial frequencies occur where pixel values change rapidly. A high-frequency filter emphasizes these high frequencies and is usually applied to enhance local variations. It can be applied with weighted windows or by subtracting the LFF output from twice the original pixel value.
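Both filters can be sketched with a 3x3 moving window. This is a minimal illustration: the function names `low_pass` and `high_pass` are hypothetical, and leaving the border pixels unchanged in the low-pass step is an assumption made to keep the code short. The high-frequency filter uses the "twice the original minus LFF" form mentioned above.

```python
def low_pass(image):
    """3x3 mean filter; border pixels keep their original value (an assumption)."""
    rows, cols = len(image), len(image[0])
    out = [[float(p) for p in row] for row in image]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            window = [image[r + dr][c + dc] for dr in (-1, 0, 1) for dc in (-1, 0, 1)]
            out[r][c] = sum(window) / 9
    return out


def high_pass(image):
    """High-frequency filter: twice the original pixel value minus the low-pass value."""
    low = low_pass(image)
    return [[2 * image[r][c] - low[r][c] for c in range(len(image[0]))]
            for r in range(len(image))]


image = [[1, 1, 1],
         [1, 10, 1],
         [1, 1, 1]]
print(low_pass(image)[1][1], high_pass(image)[1][1])
```

The bright centre pixel is smoothed towards its neighbours by the low-pass filter and exaggerated by the high-pass filter.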

Question 30 (2 marks): What is the main difference between the Sobel Edge Detector filter and other filters?

Answer: The Sobel Edge Detector filter simultaneously uses two 3x3 filters and performs non-linear edge detection; a pixel is considered an edge if its filtered value exceeds some user-specified threshold. The Sobel Edge Detector filter is used to create edge maps, where white lines (edges) appear on a black background. Furthermore, the Sobel Edge Detector calculates the gradient of the image, giving the direction of the largest increase in brightness (from dark to light) and the rate of change.
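The two 3x3 filters and the threshold step can be sketched as below. The kernels are the standard Sobel kernels; the function name `sobel_edges`, the output values of 255 and 0, and leaving border pixels as non-edges are assumptions made for this illustration.

```python
import math

# Standard Sobel kernels for the horizontal (GX) and vertical (GY) gradients.
GX = [[-1, 0, 1],
      [-2, 0, 2],
      [-1, 0, 1]]
GY = [[-1, -2, -1],
      [0, 0, 0],
      [1, 2, 1]]


def sobel_edges(image, threshold):
    """Return an edge map: 255 (white) where gradient magnitude exceeds threshold, else 0."""
    rows, cols = len(image), len(image[0])
    edges = [[0] * cols for _ in range(rows)]  # border pixels stay 0 (an assumption)
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = sum(GX[i][j] * image[r - 1 + i][c - 1 + j]
                     for i in range(3) for j in range(3))
            gy = sum(GY[i][j] * image[r - 1 + i][c - 1 + j]
                     for i in range(3) for j in range(3))
            # Combining the two filters with a square root makes the operator non-linear.
            magnitude = math.hypot(gx, gy)
            edges[r][c] = 255 if magnitude > threshold else 0
    return edges


step = [[0, 0, 10],
        [0, 0, 10],
        [0, 0, 10]]
print(sobel_edges(step, threshold=20))
```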

Question 31 (6 marks): Explain the concept of Principal Components Analysis (PCA) and explain why it is useful in remote sensing.

Answer: PCA is a method for reducing a set of redundant (correlated) variables to a smaller number of principal components (the main ones). It reduces the information in an n-band data set into fewer bands while maintaining as much information as possible. The principal components can be used in place of the original data. This method is great at simplifying data for modeling purposes (biophysical lab).

Question 32 (4 marks): Explain the concept of image fusion / pan-sharpening. Describe one technique for undertaking image fusion / pan-sharpening.

Answer: Image fusion combines many images to provide more information than is available in one image. It can occur at the pixel, feature or decision processing level. Pan-sharpening transforms coarse spatial resolution multi-spectral imagery into fine spatial resolution colour imagery by fusing it with a fine spatial resolution panchromatic (black and white) image. One technique is the Brovey method, which is limited to 3 bands and can cause significant colour distortion.
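The Brovey method can be sketched for a single pixel. The usual form of the transform scales each multispectral band by the ratio of the panchromatic value to the sum of the three bands. The sketch assumes the multispectral bands have already been resampled to the panchromatic pixel grid; the function name `brovey_pixel` and the handling of a zero band sum are assumptions for illustration.

```python
def brovey_pixel(red, green, blue, pan):
    """Brovey transform for one pixel: each band * pan / (red + green + blue)."""
    total = red + green + blue
    if total == 0:
        return (0.0, 0.0, 0.0)  # placeholder choice for a completely dark pixel
    return tuple(band * pan / total for band in (red, green, blue))


# Hypothetical coarse multispectral values and a fine-resolution panchromatic value.
print(brovey_pixel(10, 20, 30, 120))
```

The ratios between the three output bands match those of the input bands, while their sum equals the panchromatic value, which is where the fine spatial detail comes from.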

Question 33 (4 marks): When undertaking a supervised classification, what steps should one take to make sure that a training data set most accurately represents the spectral variation present within each information class.

Answer: Ensure that training areas:

- cover enough pixels to encompass the total variation (minimum 100 pixels)

- comprise 5-10 training fields spread throughout the image

- are sized appropriately: smaller training fields capture variability better than a few large ones, but they must be large enough to accurately estimate spectral properties. Fields that are too large may include undesirable variation

- are located throughout the image, taking care near edges to avoid transitional regions

- contain data that are uniform and not bimodal. If a class is not spectrally uniform, it may be better to train it as 2 classes and merge them afterwards. Afterwards, inspect frequency histograms to note bimodal tendencies or skewness. Split training classes if necessary.

Question 34 (2 marks): Explain how the analysis of training class histograms can improve the definition of training classes for a supervised classification.

Answer: Training class histograms show the frequency distribution of spectral data for a given class. They also allow bimodality to be identified, so that the data can be split into subclasses if necessary.

Question 35 (8 marks): Describe how the following supervised classifications work: (a) Box classifier; (b) Minimum distance to means classifier; (c) Nearest neighbour classifier; and (d) Maximum likelihood classifier.

Answer:

- (a) Box classifier: Based upon the range of pixel values from the training areas. Each class boundary is defined by a box around the class mean in spectral space (one band vs another), usually ±1 SD. A pixel is classified according to the box it falls in, or classified as unknown if it is not in any box. Fast, but there are problems if class ranges overlap.

- (b) Minimum distance to means classifier: Uses the mean pixel value of each training class. A pixel is classified by computing the distance between its value and each of the class means, and is assigned to the nearest class mean, or to unknown if it is beyond some predefined distance. Fast, but insensitive to differing amounts of variance in spectral response, so it is not used when spectral classes are similar and have high variance.

- (c) Nearest neighbour classifier: Uses the value of the nearest pixel in the training data (and the distance to that pixel); a pixel is classified based on the training class which is closest in spectral distance. Variants are nearest-neighbour, k-nearest-neighbour and distance-weighted k-nearest-neighbour. Slow because distances must be calculated between the pixel and all pixels in the training data. Not used when the training data are not well separated in spectral space.

- (d) Maximum likelihood classifier: Assumes training data and classes are normally distributed. Each class has a probability density function that gives the probability of a pixel belonging to that class. A pixel is assigned to the most likely class, or to unknown if the probability is below some threshold. Slow!! Not always effective.
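As an illustration of one of these, the minimum distance to means classifier can be sketched as below. This is a minimal version under stated assumptions: Euclidean distance in spectral space is assumed, the function names `class_means` and `classify` are hypothetical, and the two-band training values are made up.

```python
import math


def class_means(training):
    """training maps a class name to a list of training pixel vectors (one value per band)."""
    return {name: [sum(band) / len(samples) for band in zip(*samples)]
            for name, samples in training.items()}


def classify(pixel, means, max_distance=None):
    """Assign the pixel to the nearest class mean, or 'unknown' if it is too far away."""
    best_name, best_distance = None, math.inf
    for name, mean in means.items():
        distance = math.dist(pixel, mean)  # Euclidean distance in spectral space
        if distance < best_distance:
            best_name, best_distance = name, distance
    if max_distance is not None and best_distance > max_distance:
        return "unknown"
    return best_name


# Hypothetical two-band training data (red, NIR).
training = {"water": [(10, 5), (12, 7)],
            "forest": [(40, 80), (44, 84)]}
means = class_means(training)
print(classify((13, 8), means), classify((200, 200), means, max_distance=50))
```

Only the class means are used, so two classes with very different spreads are treated identically, which is the insensitivity to variance noted in (b).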

Question 36 (1 marks): Maximum likelihood classifiers always produce better classifications than any other classifier. True or False?

Answer: False. It is computationally intensive and does not always produce superior results.

Question 37 (3 marks): List three advantages and three disadvantages of supervised classification.

Answer: Advantages of Supervised Classification:

- Analyst defines informational classes for a specific purpose within study region.

- Allows comparison to other classifications of same or neighboring regions.

- Classification is tied to specific areas of known identity (training areas).

- Analyst may be able to detect serious errors by examining training data.

Disadvantages of Supervised Classification:

- Analyst imposes a classification structure upon the data.

- Training data is defined on informational classes and not spectral classes.

- Training data may not be representative of entire image conditions.

Question 38 (2 marks): What is fuzzy classification, and what is its main advantage over traditional “crisp logic” classification?

Answer: Fuzzy supervised classification allows “mixed pixels” to be partial members of many classes. E.g. Pixel is 0.3 = water; 0.7 = forest. Crisp logic assigns one thematic class to a pixel, ensuring many pixels will be in error to some degree.

Question 39 (2 marks): Why do we often undertake post-classification smoothing after we generate an image classification?

Answer: Traditional per-pixel classifiers may lead to “salt and pepper” appearance. It is often necessary to smooth the classified output to show only the dominant classification.
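One common way to do this smoothing is a majority (modal) filter, sketched below. This is an illustration rather than the course's prescribed method: the function name `majority_filter`, the 3x3 window and leaving border pixels unchanged are all assumptions.

```python
from collections import Counter


def majority_filter(classified):
    """Replace each interior pixel with the most common class in its 3x3 window."""
    rows, cols = len(classified), len(classified[0])
    out = [list(row) for row in classified]  # border pixels are left unchanged
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            window = [classified[r + dr][c + dc]
                      for dr in (-1, 0, 1) for dc in (-1, 0, 1)]
            out[r][c] = Counter(window).most_common(1)[0][0]
    return out


# A single isolated pixel of class 2 inside a region of class 1.
classified = [[1, 1, 1],
              [1, 2, 1],
              [1, 1, 1]]
print(majority_filter(classified))
```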

Question 40 (5 marks): List and briefly explain the five remote sensing considerations when doing a multi-temporal change detection analysis.

Answer: Remote Sensing Considerations when using multiple image dates:

- Temporal resolution; approximately the same time of day, same anniversary dates, etc. Eliminates diurnal and seasonal sun angle effects that can confuse the interpretation of multi-date imagery.

- Look angle; influence of look angle on surface reflectance can be significant (different effects of forward scatter and back-scatter).

- Spatial resolution; Spatial resolution is determined by the features to be observed. Imagery should be registered to common map projection with high accuracy.

- Spectral resolution; Spectral resolution of sensor used should be sufficient to sense reflectances in spectral regions of interest. The same sensor should be used to collect data on multiple dates.

- Radiometric resolution; Output from sensors reach the analyst as a set of digital numbers (DN). Each value is recorded as a series of binary digits (bits; 8 bits = 1 byte). Range of brightness values in an image is given by the number of bits available. Should thus keep constant when using multiple image dates.

Question 41 (4 marks): What are the differences between image differencing and image ratioing techniques for undertaking change detection analysis? Under what circumstances would you choose to use the image differencing technique? Under what circumstances would you choose to use the image ratioing technique?

Answer: The difference is absolute versus relative change. Small absolute changes can be important because they may indicate large relative changes on the landscape. Image differencing subtracts a first-date image from a second-date image pixel-by-pixel; a pixel that has not changed yields a value of 0. Image ratioing ratios a first-date image to a second-date image pixel-by-pixel; a pixel that has not changed yields a value of 1. Use image differencing for identifying regions of change and no-change, including with derived data such as vegetation indices (e.g. NDVI: Vegetation Index Differencing). Use image ratioing for reducing the impacts of sun angle, shadow and topography. Cannot be used to define thresholds.
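The two operations can be compared side by side with a short sketch. The function names `difference_image` and `ratio_image` are hypothetical, the pixel values are made up, and returning `None` where the second-date value is zero is an arbitrary choice for this illustration.

```python
def difference_image(date1, date2):
    """Second-date image minus first-date image, pixel by pixel (0 = no change)."""
    return [[b - a for a, b in zip(row1, row2)] for row1, row2 in zip(date1, date2)]


def ratio_image(date1, date2):
    """First-date image divided by second-date image, pixel by pixel (1 = no change)."""
    return [[a / b if b != 0 else None for a, b in zip(row1, row2)]
            for row1, row2 in zip(date1, date2)]


date1 = [[10, 50, 30]]
date2 = [[20, 60, 30]]
print(difference_image(date1, date2))
print(ratio_image(date1, date2))
```

The first two pixels have the same absolute change of 10, but their ratios differ because the relative change is much larger for the darker pixel; the unchanged third pixel gives 0 and 1 respectively.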

Question 42 (3 marks): List three advantages that microwave remote sensing has over optical remote sensing methods.

Answer:

- Can penetrate the atmosphere under most conditions, including cloud, allowing all-weather remote sensing.

- Gives a different view of terrain compared to visible and thermal imaging.

- May penetrate vegetation, sand, and surface layers of snow.

- Has own illumination, and the angle of illumination can be controlled.

- Enables resolution to be independent of distance to the object (SAR).

- May operate simultaneously in several wavelengths (frequencies), having multi-frequency potential.

- Can produce overlapping images for stereoscopic viewing and RADARgrammetry.

- Supports interferometric operation using two antennas for 3-D mapping.

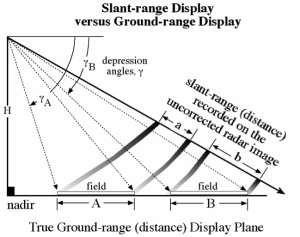

Question 43 (4 marks): Explain the differences between (a) ground-range, and (b) slant-range in microwave remote sensing. Will two objects 100m apart in the slant range be 100m apart in the ground range?

Answer:

- Ground-range geometry: Objects are shown in their proper (x,y) positions relative to one another.

- Slant-range geometry: Uncorrected images are displayed in slant range, the straight-line distance between the RADAR and the target. Slant range can be converted to ground range using the Pythagorean theorem.

- No. Two objects 100 m apart in the slant range will generally not be 100 m apart in the ground range, but the slant-range distance will be closer to the ground-range distance as the distance from the sensor increases.
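The Pythagorean conversion can be checked numerically. The sketch below assumes flat terrain and a known platform altitude; the function name `slant_to_ground` and the altitude and range values are hypothetical.

```python
import math


def slant_to_ground(slant_range, altitude):
    """Ground range from slant range over flat terrain: sqrt(slant^2 - altitude^2)."""
    return math.sqrt(slant_range ** 2 - altitude ** 2)


altitude = 3000.0  # hypothetical platform altitude in metres

# Two objects 100 m apart in slant range, in the near range and in the far range.
near_separation = slant_to_ground(5100.0, altitude) - slant_to_ground(5000.0, altitude)
far_separation = slant_to_ground(50100.0, altitude) - slant_to_ground(50000.0, altitude)
print(round(near_separation, 1), round(far_separation, 1))
```

With these made-up numbers the near-range pair is noticeably more than 100 m apart on the ground, while the far-range pair is only slightly more than 100 m apart.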

Question 44 (6 marks): What are three types of geometric distortion that occur in microwave imagery? Explain each distortion.

Answer: Relief displacement in RADAR images is in direction towards Radar antenna.

- Foreshortening: Terrain that slopes towards the RADAR appears compressed. Difficult to correct. Influenced by object height (higher object = greater foreshortening), depression angle (greater depression angle = greater foreshortening), and location of objects in the across-track range (objects in the near range are foreshortened more than those in the far range).

- Layover: An extreme case of foreshortening. Occurs when the incident angle is smaller than the foreslope angle. Causes the summits of tall features to lay over their bases. Cannot be corrected even if surface topography is known. Care must be taken when interpreting areas where layover exists (mountainous).

- Shadow: Obscures important information; no information is recorded in shadowed areas. Features casting shadows in the far range may have backslope illumination in the near range. Shadow can provide important information on landscape features.

Question 45 (4 marks): What surface environmental factors affect the strength of RADAR back-scatter?

Answer:

- Surface roughness characteristics

- Sensor properties (λ, depression angle, polarization)

- Surface electrical characteristics

- Vegetation characteristics

- Surface moisture characteristics